Introduction: Why Evidence Matters More in 2026

The recent 2025 and 2026 guidance updates from U.S. Citizenship and Immigration Services (USCIS) now explicitly recognize types of evidence that barely existed when many O-1A rules were first written. Digital publications, podcasts, open-source contributions, and early-career awards tied to emerging technologies like AI and machine learning are expressly included under the updated policy.

Despite this broader recognition of modern evidence formats, officers appear increasingly attentive to credibility, independence, and real-world impact. So, what documentation helps avoid requests for evidence (RFEs) or denials?

PassRight is a technology and project management platform, not a law firm. We do not provide legal advice or representation. All legal strategy and advocacy are provided by the independent immigration attorneys at Sapochnick Law Office.

What Changed in 2026?

Neither the O-1 visa category rules nor the eight criteria have changed in 2026; rather, USCIS has updated its explanations and applications to include modern evidence formats for AI, machine learning, and other emerging technologies. USCIS is increasingly willing to evaluate collaborative, non-traditional innovation using field-specific proof.

Open-source work is the clearest example of this scope. Contributions to software repositories, data and modeling, designs and frameworks, protocols and methods, or other technical standards are non-traditional kinds of evidence that USCIS will consider as major-significance contributions, provided that qualitative standards are met.

Early-career awards and recognition

A second major shift is the updated guidance that beneficiaries need not be “senior” in their fields to demonstrate sustained recognition. This is especially relevant to early-career founders and researchers in startup and tech fields. Practitioner summaries corroborate this, highlighting that “awards for excellence” need not be received at an advanced stage of a beneficiary’s career. This still excludes non-professional merit, such as student awards, while allowing field-relevant early-career achievements, for example competitive fellowships, admittance into highly selective programs, major hackathons with rigorous screening, or recognized research prizes.

Digital publications, podcasts, and online media coverage

The key phrasing of the “published material” criterion remains that the material must be directly and explicitly “about” the beneficiary and relate directly to their work. The clarification that published material is not limited to legacy print media is one of the most helpful of the updates for tech professionals. USCIS must consider industry-specific or major online publications as eligible to meet the criterion, as well as audio/video coverage with supporting transcripts or documentation as part of the evidence.

Overview of O-1A Visa Evidentiary Criteria and 2025/2026 updates

At its core, the O-1A classification has always been, and will remain, evidence-driven. Under 8 C.F.R. § 214.2(o), applicants must demonstrate extraordinary ability by meeting at least three of eight evidentiary criteria, or by presenting comparable evidence when a given criterion does not readily apply. The 2025/2026 updates clarify how modern, digital-first achievements may satisfy the traditional criteria when properly documented. The examples below reflect traditional interpretations of the criteria alongside field-appropriate interpretations, which have been explicitly deemed acceptable since the updates.

The eight O-1A evidentiary criteria: traditional vs. updated examples (non-exhaustive)

| O-1A Evidentiary Criterion | Traditional Evidence | Modern 2026 (Digital & Tech-Focused) Evidence |
| --- | --- | --- |
| Nationally or internationally recognized awards | Senior industry awards; lifetime achievement prizes | Selective early-career awards; competitive hackathons; prizes for AI/LLM research |
| Membership in associations | Invitation-only professional societies | Selective accelerator programs; technical fellowships with merit-based selection |
| Published material about the beneficiary | Prominent newspaper features; print magazine profiles | Digital publications, podcasts, video interviews; reputable online media with large audience or following |
| Participation as a judge of the work of others | Conference judging panels; grant review committees | Peer-review of academic papers; reviewing open-source pull requests; hackathon judging |
| Original contributions of major significance | Patented inventions; proprietary systems | Open-source projects with adoption; widely used AI models; contributions to core frameworks |
| Authorship of scholarly articles | Peer-reviewed academic journals | Trade and industry publications with editorial review; thought-leadership in AI/technology |
| Critical or essential employment | Executive roles at major companies | Lead engineer or researcher roles at distinguished startups or AI labs |
| High salary or remuneration | Executive compensation packages | Above-market compensation; equity tied to specialized expertise |

The quality of the evidence is far more decisive in a formal evaluation than the number of criteria in a submission. Officers will evaluate the strength of the evidence under each criterion, not simply assess whether the criterion is technically met. Across all evidentiary categories, the following qualitative standards matter most:

- Significance: Did the work meaningfully influence a product, industry, or field of expertise?

- Selectivity: Were awards, roles, or professional opportunities competitive and merit-based?

- Sustained impact: Does the record show ongoing recognition rather than a single success?

Narrative framing of evidence around these qualitative considerations will continue to carry weight in 2026, particularly for applicants whose startup or early-career impact is measured through adoption, engagement, or influence rather than seniority or tenure. “Evidence mapping” frameworks that some practitioners use to systematically link modern achievements to specific regulatory criteria can be an effective way to organize evidence, provided they are qualitatively robust.

Leveraging Digital Media & Online Presence

While visibility in digital media is increasingly important in O-1A petitions, not all online exposure is equally persuasive. Since the 2026 update, the key question is no longer whether digital media counts, but which types of digital coverage meet USCIS standards.

Credible digital publications vs. self-promotion

Independent media coverage that reflects third-party recognition can support the “published material about the beneficiary” criterion. To distinguish qualifying publications, the critical question is: who controls the content?

Generally, strong media coverage appears in:

- Independent digital publications with established editorial processes

- Professional or industry-specific outlets with a defined readership

- Platforms where the applicant does not control publication or approval (i.e., not self-promotion)

Podcasts, video interviews, and conference presentations

Video and audio recordings can also qualify as evidence when they function as independent media coverage, not promotional material, as discussed above. The credibility of the media is more important than its format.

Podcasts, interviews, or presentations are strong when they feature:

- A third-party host with recognized expertise or audience reach

- Editorial discretion over guests and topics

- Substantive (rather than superficial) discussion of the applicant’s work, research, or contributions

Short clips, informal livestreams, or company-hosted webinars are less persuasive unless they are part of a broader, independent media ecosystem that satisfies the above.

Blogging, trade journals, and industry-focused articles

To be clear, articles authored by the beneficiary are evaluated under a separate O-1A criterion and must not be conflated with “published material about the beneficiary,” which requires third-party editorial control and coverage. Under either criterion, blogs and industry publications occupy an important middle ground between academic journals and mainstream media. USCIS has long recognized that “scholarly” does not necessarily mean “peer-reviewed” in the academic sense, especially in applied fields like engineering, technology, and AI.

Articles published in trade journals or professional outlets may qualify when they:

- Serve a knowledgeable, field-specific audience

- Apply independent, editorial review standards

- Focus on technical, professional, or industry-relevant subject matter

While academic journals rely on formal peer-review, trade publications typically rely on editors with subject-matter expertise to vet content. Blogs and trade publications may therefore qualify as credible publications when they are not self-promoting. In short, qualifying documentation reflects a defined editorial process and demonstrates professional recognition or authorship within the field.

Open-Source Contributions and GitHub Evidence

Open-source work has become one of the most common and most misunderstood forms of evidence in recent O-1A petitions. Since the 2026 update, the question is no longer whether open-source contributions count. What remains unclear is how to present them so that they demonstrate significance in a way that aligns with USCIS expectations.

Why open-source work counts as original contributions

Open-source contributions can support the “original contributions of major significance” criterion when they demonstrate impact beyond routine participation or contained influence within a company. The key is showing that the work materially advanced a field, technology, or professional community and not merely that code was published publicly.

This is particularly relevant in fields like AI, machine learning, and software engineering, where innovation is often collaborative rather than proprietary, iterative rather than tied to a single publication, and distributed across platforms rather than owned by one organization. Accordingly, open-source work can qualify when it demonstrates field-level impact.

Metrics to collect: stars, forks, adoption, & dependency data

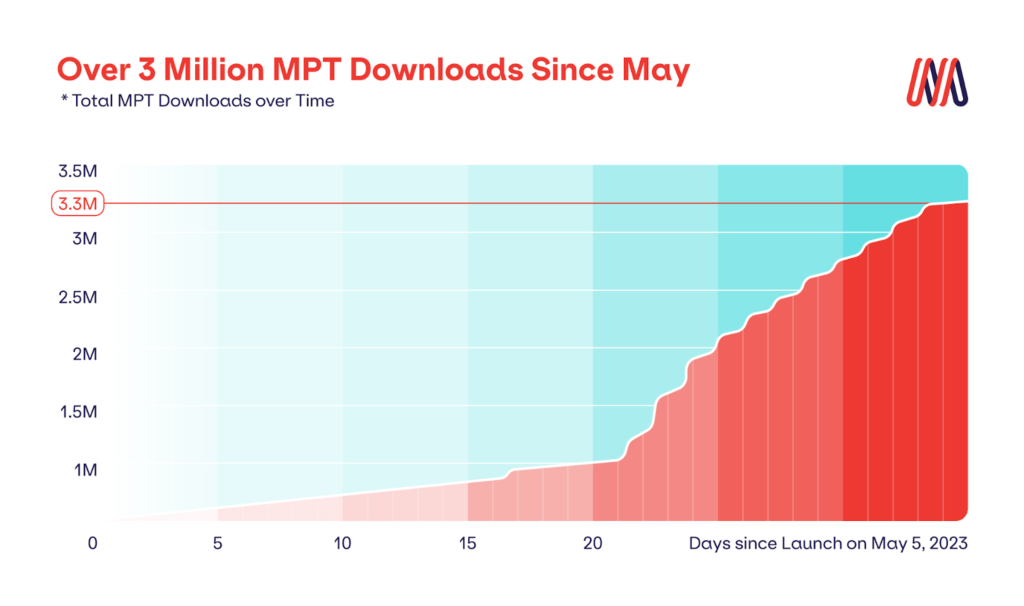

Quantitative metrics are useful for documenting impact, but to be persuasive evidence of the significance of open-source work they must show use and adoption, not only popularity.

Persuasive open-source metrics include:

- Stars and forks, which signal peer interest and engagement

- Download counts or package installations

- Dependency data, showing the projects or products that rely on the open-source work

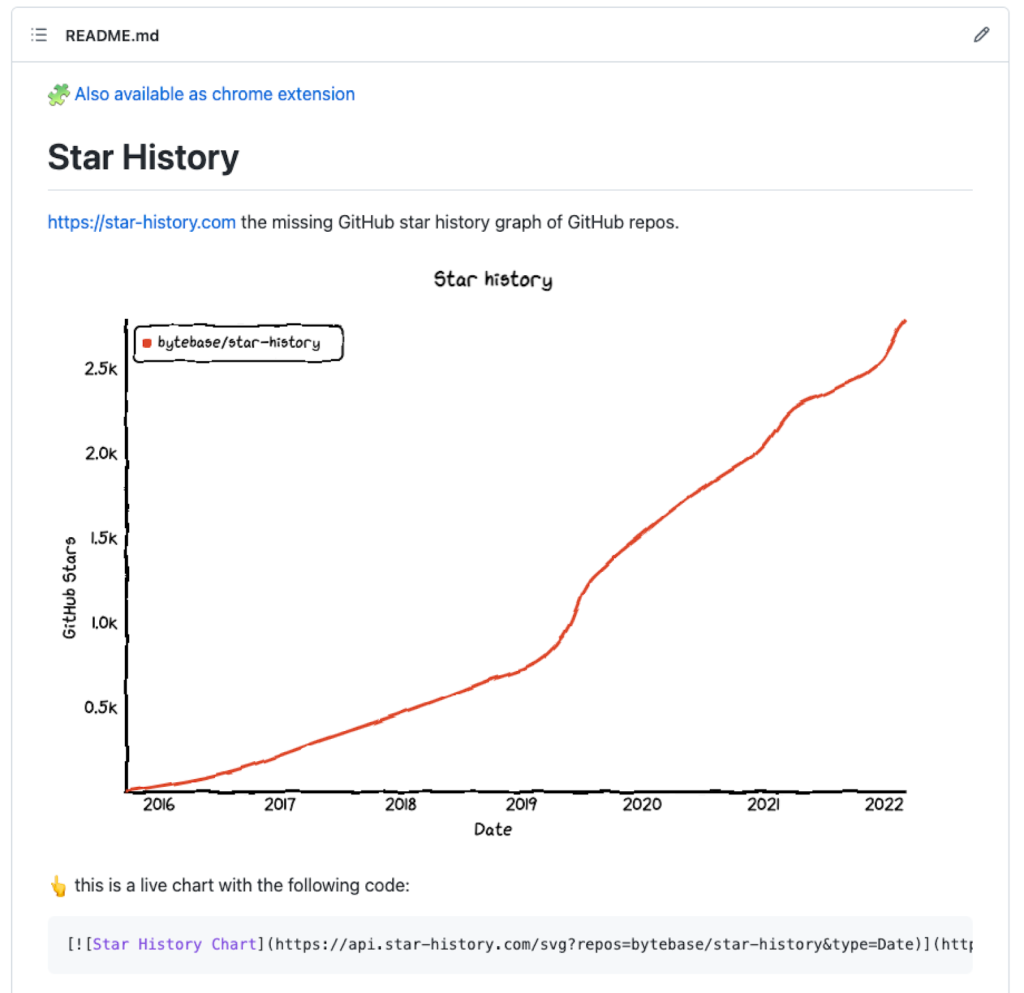

Source: https://www.star-history.com/blog/add-a-live-star-history-chart-to-your-github-readme

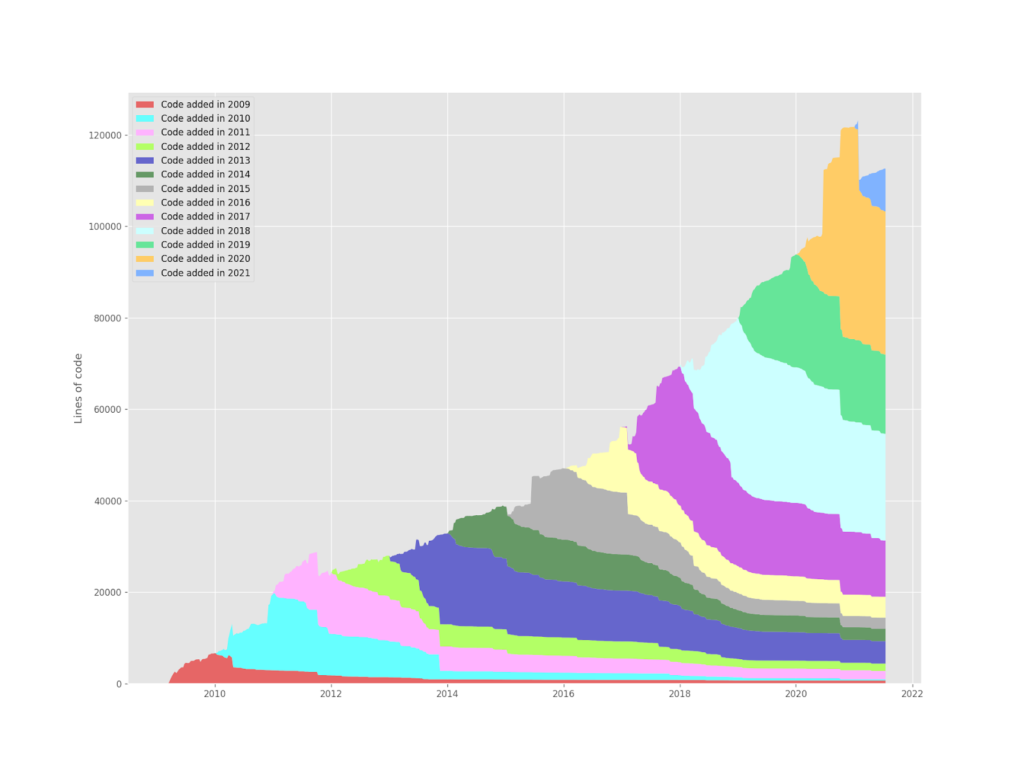

How these metrics change over time offers compelling evidence of impact, influence, and overall significance. Growth trends demonstrate sustained relevance and increasing influence of the contribution.
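As a minimal sketch of how growth over time might be summarized for an exhibit, the Python below computes the change between dated star-count snapshots. The snapshot values and the function name `growth_between` are hypothetical and purely illustrative; real figures would come from the repository's own dated records.

```python
from datetime import date

# Hypothetical star-count snapshots (date, stars). Illustrative numbers only;
# in practice these would come from dated screenshots or exported records.
snapshots = [
    (date(2024, 1, 1), 400),
    (date(2024, 7, 1), 900),
    (date(2025, 1, 1), 1800),
]


def growth_between(snapshots):
    """Return (start, end, absolute_change, percent_change) for each interval."""
    ordered = sorted(snapshots)  # sort by date so intervals run forward in time
    rows = []
    for (d0, n0), (d1, n1) in zip(ordered, ordered[1:]):
        # Percent change is undefined when the starting count is zero.
        pct = (n1 - n0) / n0 * 100 if n0 else None
        rows.append((d0, d1, n1 - n0, pct))
    return rows


for start, end, delta, pct in growth_between(snapshots):
    pct_text = "n/a" if pct is None else f"{pct:.0f}%"
    print(f"{start} to {end}: {delta:+d} stars ({pct_text})")
```

With the sample numbers above, the two intervals show increases of 500 and 900 stars (125% and 100%). The same calculation can be applied to downloads or dependent-project counts, which speak more directly to adoption than stars do.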

Corporate or institutional adoption is especially compelling. Evidence that a company, research group, or widely used product depends on open-source work greatly strengthens the argument that the work is of major significance.

Example (anonymized case):

A machine learning engineer relied on a GitHub repository with documented adoption by two enterprise AI platforms, supported by strong usage indicators (e.g., stars/forks and dependency tracking across dozens of public projects). The petition included exhibits showing adoption over time and independent letters explaining why the architecture mattered in practice, not just that it was popular. The case was approved without an RFE.

Source: https://github.com/erikbern/git-of-theseus

Note that metrics alone are rarely sufficient to establish significance. Strong open-source evidence pairs quantitative data with corroborating documentation that explains why the numbers matter. Letters from recognized experts in the field that clearly explain the influence and impact reflected in the metrics are especially effective.

AI Publications & Digital Impact

AI has not only changed technical work but has also shifted how expertise is communicated and recognized. Many O-1A applicants in AI, machine learning, and related fields demonstrate authorship and influence through digital publications, trade journals, and practitioner-led outlets rather than traditional academic journals. The key in 2026 is understanding how USCIS will evaluate these materials, and how AI tools can be strategically leveraged without undermining credibility.

Validating trade and industry publications vs. academic journals

USCIS has long acknowledged that “scholarly” evidence is field-dependent. In evolving areas like AI, machine learning, and software engineering, professional recognition often appears outside of academic journals, in venues such as industry publications, technical blogs, and other practitioner-focused outlets.

For O-1A purposes, the distinction is not academic versus non-academic, but whether the publication reflects credible authorship and professional recognition. Publications can support the authorship criterion when applicants clearly demonstrate:

- Editorial vetting: content is reviewed or selected by editors with subject-matter expertise

- Expert audience: readers are professionals or specialists in the field

- Substantive content: articles meaningfully engage with technical or professional topics

In many AI-focused careers, these publications are more representative of real-world impact than traditional academic journals, provided their standards are clearly documented.

Further, AI tools can be a legitimate part of a publication strategy when used thoughtfully. Using AI to identify relevant publication outlets or industry platforms, to draft outlines, or to clarify technical explanations can be effective. The underlying expertise and perspective must come from the applicant.

Ethical considerations: authenticity and editorial independence

Articles that sound too much like AI risk undermining credibility. Maintaining originality, reliable authorship, and editorial distance helps ensure that AI-assisted publications strengthen, rather than weaken, an O-1A visa application.

Early-Career Recognition & Awards

One of the most consequential clarifications in the 2025/2026 guidance is that extraordinary ability does not require decades of experience. This is meaningful for early-career professionals, students, and young founders: their recognition can still be persuasive, provided it reflects genuine distinction within competitive fields. The updates clarify how recognition and awards requirements apply to early-career professionals.

Importance of early-career awards and fellowships

Early-career awards support the O-1A criteria when they demonstrate selectivity, competitiveness, and peer recognition, beyond mere participation. Petitions should specify the number of applicants versus the number selected, who administered or judged the award, and, ideally, under what award criteria.
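The applicants-versus-selected figure can be stated as a simple acceptance rate. The sketch below is illustrative only: the function name `acceptance_rate` and the sample numbers are assumptions, not figures from any real program.

```python
def acceptance_rate(selected, applicants):
    """Return the share of applicants who were selected, as a fraction."""
    if applicants <= 0 or not 0 <= selected <= applicants:
        raise ValueError("need 0 <= selected <= applicants and applicants > 0")
    return selected / applicants


# Hypothetical fellowship: 25 recipients chosen from 1,000 applicants.
rate = acceptance_rate(25, 1000)
print(f"Selected 25 of 1000 applicants ({rate:.1%})")
```

A rate like this is only meaningful alongside its source, such as a letter or published statistics from the organization that administered the award.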

For many young and early-career professionals, cumulative recognition often tells a stronger story than a single award. When taken together, multiple smaller achievements can effectively demonstrate extraordinary ability. Evidence should be strategically organized so that USCIS officers can easily see how awards, judging activity, professional roles, and publications reinforce each other across criteria.

Building an Evidence Matrix for 2026 Petitions

As O-1A evidence becomes more digital and diverse, effectively organizing evidence in a petition is as important as clearly expressing its substantive impact. In 2026, many strong petitions will succeed not because the applicant has more achievements, but because those achievements are clearly and consistently mapped to the regulatory criteria. This is where an evidence matrix becomes invaluable.

Mapping achievements to specific criteria and identifying gaps

An evidence matrix is useful as both a planning tool and an audit tool. It is a structured document that lists each O-1A criterion and connects specific evidence to it, sometimes with rationale and strategic notes for petition drafting. Used early, it helps applicants understand which criteria they already satisfy and where evidence can be strategically developed. Importantly, this approach also shows how a single achievement can often support multiple criteria.
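One way to picture an evidence matrix is as a mapping from each criterion to the exhibits that support it. The Python below is a rough sketch under stated assumptions: the short criterion labels, the exhibit entries, and the function names `build_matrix` and `gaps` are all hypothetical, and whether a given item actually supports a criterion is a question for the attorney, not the script.

```python
# Short labels for the eight criteria (abbreviations chosen for this sketch).
CRITERIA = [
    "awards",
    "memberships",
    "published material",
    "judging",
    "original contributions",
    "scholarly articles",
    "critical employment",
    "high remuneration",
]

# Hypothetical exhibits; each lists every criterion it might support.
exhibits = [
    {"id": "Exhibit A", "item": "Open-source library with dependency data",
     "criteria": ["original contributions"]},
    {"id": "Exhibit B", "item": "Selective fellowship with acceptance data",
     "criteria": ["awards", "memberships"]},
    {"id": "Exhibit C", "item": "Peer-review invitations",
     "criteria": ["judging"]},
]


def build_matrix(exhibits):
    """Map each criterion to the IDs of the exhibits tagged with it."""
    matrix = {criterion: [] for criterion in CRITERIA}
    for exhibit in exhibits:
        for criterion in exhibit["criteria"]:
            # A mistyped criterion label raises KeyError, which surfaces typos.
            matrix[criterion].append(exhibit["id"])
    return matrix


def gaps(matrix):
    """Return the criteria that have no exhibit mapped to them yet."""
    return [criterion for criterion, ids in matrix.items() if not ids]


matrix = build_matrix(exhibits)
for criterion, ids in matrix.items():
    print(f"{criterion}: {', '.join(ids) if ids else '(no evidence yet)'}")
print("Gaps:", gaps(matrix))
```

In this sample, Exhibit B appears under two criteria, and the gap list shows the four criteria with nothing mapped yet. A spreadsheet serves the same purpose; the point is that every exhibit is tied to a named criterion and empty rows are visible early.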

Documentation best practices and organization

The quality of documentation decides the strength of the evidence. Officers review large volumes of material under time constraints, so structure and clarity are essential. Consistency is key: dates, roles/titles, metrics, and descriptions should align across exhibits, reference letters, and summaries.

PassRight’s “O-1 Visa for AI Founders in 2025: Evidence Mapping Under Heightened Vetting” provides additional context on why early organization and clear mapping are especially important under heightened review standards.

Common Pitfalls and Quality Standards

As the range of accepted evidence has expanded, USCIS has become more exacting about quality and credibility. Many RFEs and denials stem from how the evidence is presented, sourced, and explained, not from the beneficiary’s lack of achievement.

Avoiding self-promotional or paid media

One of the most frequent weaknesses in O-1A petitions is reliance on self-promotional content. Personal blogs, company websites, and self-published articles carry little weight since they lack independent editorial control. Articles that appear advertorial, media placements obtained through sponsorships or paid PR services, and content published on platforms controlled by the beneficiary or their employer are common red flags that can trigger an RFE.

Be mindful that officers assess the credibility of digital and traditional outlets, focusing on independence and editorial discretion. Lesser-known outlets can also be persuasive if their role and audience are clearly explained. It is best practice to include brief explanations of outlets’ focus, readership, and editorial processes. Providing this context upfront can prevent misunderstandings and reduce follow-up questions and RFEs.

Early-career mistakes: under-documented peer-review or conference activity

Peer-review, judging, and conference participation can support a petition, but only when they are properly documented.

Common mistakes include:

- Missing invitation or confirmation letters

- Lack of explanation for how reviewers or judges are selected

- Failure to document the relevance of the beneficiary’s expertise to the event

- Unclear scope of the activity (one-time vs. recurring, minor vs. substantive)

In practice, peer-review and conference activity only carry weight when clearly documented as selective, expertise-based, and substantive. Without this context, otherwise legitimate achievements risk being discounted as informal participation rather than genuine professional recognition.

Conclusion: Strategic Takeaways for 2026 Applicants

The 2025/2026 updates broadened acceptable evidence but raised the bar for quality and credibility. A concise, well-documented record mapped clearly to the criteria will always outperform a large, unfocused one.

Most applicants fail because they collect evidence first and develop a strategy later. At PassRight, we reverse this process to eliminate wasted effort. Our workflow begins with legal strategy setting by our affiliated independent immigration attorneys. Once the legal framework is established, our platform provides the tools to help you target and collect the specific evidence that fits that strategy.

This proactive approach means you aren’t guessing what the lawyer needs; you are building a high-impact portfolio from day one.

Ready to set your strategy? Schedule a call with our customer care team. They will explain our strategy-first process and connect you with an attorney to map out your 2026 O-1A path.
